Introduction

My last two blog posts were about creating Docker images for an ASP.NET/JavaScript web application. In the first post, I described the considerations involved in producing an optimized image. The second post covered creating a CI/CD process that produces Docker images.

Producing nice, lean Docker images is good karma, but to run them in a production environment they need to be deployed to a container orchestration system.

The three most popular container orchestration systems are Kubernetes, Mesosphere and Docker Swarm. Of these three, Kubernetes is arguably the most popular, and we are going to use it to run our containers. Running your own Kubernetes cluster in a production environment is a considerable undertaking. Luckily, all major cloud vendors provide Kubernetes as a service: Google Cloud has Google Kubernetes Engine, Amazon AWS provides the Elastic Container Service for Kubernetes (Amazon EKS) and Microsoft Azure has the Azure Kubernetes Service (AKS). We are going to use Azure Kubernetes Service to run our containers.

Provisioning an Azure Kubernetes Service

1) Log on to the Azure portal at https://portal.azure.com. In the search box, type "Kubernetes". From the results listed, click Kubernetes services to see the list of Kubernetes services.

2) Click the Add button to create a new Kubernetes service. We are just going to use the default options. Fill in the details of your Kubernetes cluster and click the Review + create button.

Review the settings and click the Create button to create your AKS cluster. It takes a few minutes for a fully configured AKS cluster to be set up.
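If you prefer the command line to the portal, a roughly equivalent cluster can be provisioned with the Azure CLI, which is preinstalled in Cloud Shell. The sketch below wraps the commands in a hypothetical `provision_aks` function; the resource group, cluster name, location and the node count of 2 are assumptions to adjust, and the flags are a minimal set rather than a reproduction of every portal default.

```bash
# Sketch: provision an AKS cluster from the Azure CLI instead of the portal.
# provision_aks is a hypothetical helper; names, location and node count are placeholders.
provision_aks() {
  local resource_group="$1" cluster_name="$2" location="$3"

  # Create a resource group to hold the cluster.
  az group create --name "$resource_group" --location "$location" || return 1

  # Create the cluster; --generate-ssh-keys creates SSH keys if none exist yet.
  az aks create \
    --resource-group "$resource_group" \
    --name "$cluster_name" \
    --node-count 2 \
    --generate-ssh-keys || return 1

  # Merge the cluster credentials into ~/.kube/config so kubectl targets this cluster.
  az aks get-credentials --resource-group "$resource_group" --name "$cluster_name"
}
```

For example, `provision_aks myResourceGroup myAKSCluster eastus` followed by `kubectl get nodes` should list the two nodes once the cluster is ready.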

Once the cluster is set up, you can view the Kubernetes dashboard by clicking the "View Kubernetes dashboard" link and following the steps on the page displayed. If you are not familiar with the Kubernetes dashboard, it is a web-based interface that displays all Kubernetes resources in the cluster.

Create a Kubernetes Secret

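To enable our Kubernetes cluster to pull images from a private Docker Hub repository, we will set up a Kubernetes secret containing the credentials for our Docker Hub repo. To do this, click the ">_" button at the top of the Azure portal to open a Cloud Shell session. If kubectl in Cloud Shell is not yet connected to your cluster, first run `az aks get-credentials --resource-group <resource-group> --name <cluster-name>`.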

To create the secret, run the following command, replacing the placeholder values with your Docker Hub credentials:

```bash
kubectl create secret docker-registry dockerhubcred \
  --docker-server=https://index.docker.io/v1/ \
  --docker-username=yourusername \
  --docker-password=yourpassword \
  --docker-email=youremailaddress
```
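Typing the password directly into the command leaves it in the Cloud Shell history. A minimal sketch of one way to avoid that, assuming bash: prompt for the password with `read -s` and pass it through a variable. The function name `create_dockerhub_secret` is hypothetical; the secret name and Docker Hub server are the same as in the command above.

```bash
# Hypothetical helper: creates the dockerhubcred secret without typing the
# password as part of the command, so it is not saved in the shell history.
create_dockerhub_secret() {
  local username="$1" email="$2" password
  # -s hides the typed input, -r keeps backslashes literal; bash writes the prompt to stderr.
  read -rsp "Docker Hub password: " password
  echo >&2
  kubectl create secret docker-registry dockerhubcred \
    --docker-server=https://index.docker.io/v1/ \
    --docker-username="$username" \
    --docker-password="$password" \
    --docker-email="$email"
}
```

Usage: `create_dockerhub_secret yourusername youremailaddress`. The password is still passed to kubectl as an argument, so this keeps it out of the history but not out of the process list while the command runs.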

We can check that the new secret exists by executing:

```bash
kubectl get secret
```
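To confirm that the secret holds the credentials you expect, you can decode its `.dockerconfigjson` field, the approach shown in the Kubernetes documentation on pulling images from a private registry. The wrapper function `show_registry_secret` is hypothetical, and note that its output contains the password in plain text.

```bash
# Hypothetical helper: print the decoded Docker config stored in a
# docker-registry secret (default: dockerhubcred). Output includes the password.
show_registry_secret() {
  local name="${1:-dockerhubcred}"
  # The data key contains a dot, so it is escaped in the JSONPath expression.
  kubectl get secret "$name" --output='jsonpath={.data.\.dockerconfigjson}' | base64 --decode
  echo
}
```

The decoded JSON has an `auths` object keyed by the registry server (`https://index.docker.io/v1/` here) with the username, password, email and a base64 `auth` value.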

Create a Kubernetes Service

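In Kubernetes, containers are "housed" in pods. However, pods are mortal: they can be destroyed and recreated at any time, and a recreated pod gets a new IP address. Therefore, the endpoints of containers are exposed through an abstraction called a Kubernetes Service, which provides a stable address in front of a changing set of pods.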

Creating a Kubernetes Service is a two-stage process:

1) Create a Kubernetes Deployment. A deployment specifies a pod template, which includes details such as the Docker image and container port; replication details, i.e. how many pods should be deployed; and metadata (labels) that determine how the pods will be selected.

2) Create a Kubernetes Service. A service is defined by specifying the selection criteria (which must match the pod labels set in the deployment), the port to be exposed and the service type.
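Each of the two stages can also be performed imperatively with one kubectl command, which is handy for experiments. A minimal sketch, assuming the names and image used in this post and a recent kubectl that supports `--replicas` on `create deployment`: `kubectl create deployment` labels the pods `app=<name>`, and `kubectl expose` copies the deployment's selector into the service, so the two always match. Because `kubectl create deployment` does not set `imagePullSecrets`, the sketch attaches the `dockerhubcred` secret to the namespace's default service account instead, as described in the Kubernetes documentation. The function name is hypothetical; use either this or the YAML file that follows, not both, since they create objects with the same names.

```bash
# Sketch: the two stages from the command line instead of a YAML file.
# deploy_imperatively is hypothetical; names and image follow this post.
deploy_imperatively() {
  local name="${1:-aspnetvuejs}" image="${2:-aspnetvuejs/web:latest}"

  # Let pods in this namespace pull from the private repo by attaching the secret
  # to the default service account (do this before the pods are created).
  kubectl patch serviceaccount default \
    -p '{"imagePullSecrets": [{"name": "dockerhubcred"}]}' || return 1

  # Stage 1: the deployment; its pods are labelled app=<name>.
  kubectl create deployment "$name" --image="$image" --replicas=2 || return 1

  # Stage 2: the service, selecting pods with the deployment's labels.
  kubectl expose deployment "$name" --type=LoadBalancer --port=80 --target-port=8080
}
```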

To perform the above steps, we created the following YAML file, which declares both the service and the deployment:

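```yaml
apiVersion: v1
kind: Service
metadata:
  name: aspnetvuejs
  labels:
    app: aspnetvuejs
spec:
  type: LoadBalancer          # on AKS, provisions an Azure load balancer with a public IP
  ports:
  - port: 80                  # port exposed by the service
    targetPort: 8080          # port the container listens on
    protocol: TCP
    name: port-80
  selector:
    app: aspnetvuejs          # traffic goes to pods carrying this label
---
apiVersion: apps/v1           # apps/v1beta1 has been removed from current Kubernetes
kind: Deployment
metadata:
  name: aspnetvuejs
spec:
  replicas: 2                 # number of pods to run
  selector:
    matchLabels:
      app: aspnetvuejs        # required in apps/v1; must match the template labels
  template:
    metadata:
      labels:
        app: aspnetvuejs      # must match the Service selector above
    spec:
      containers:
      - name: private-reg-container
        image: aspnetvuejs/web:latest
        ports:
        - containerPort: 8080
      imagePullSecrets:
      - name: dockerhubcred   # the secret created earlier
```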
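Because a service only routes traffic to pods whose labels match its selector, a mismatch between the two is an easy mistake to make, and it fails silently: the service is created but has no endpoints. A minimal sketch of a check to run after applying the file as described below, assuming both objects are named `aspnetvuejs` and selected by a single `app` label; the function name `check_selector` is hypothetical.

```bash
# Hypothetical check: compare the Service selector with the Deployment's
# pod template label, then list the endpoints the Service routes to.
check_selector() {
  local name="${1:-aspnetvuejs}" selector labels
  selector=$(kubectl get service "$name" -o jsonpath='{.spec.selector.app}')
  labels=$(kubectl get deployment "$name" -o jsonpath='{.spec.template.metadata.labels.app}')
  if [ -z "$selector" ] || [ "$selector" != "$labels" ]; then
    echo "mismatch: service selects app=$selector, pods are labelled app=$labels" >&2
    return 1
  fi
  echo "ok: app=$selector"
  # Lists the pod IPs behind the service; no addresses means no ready pod matched.
  kubectl get endpoints "$name"
}
```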

To apply the YAML file, I executed the following series of commands in my Cloud Shell session:

1) Create the YAML file by executing:

```bash
vim oneservice.yaml
```

Paste in the YAML content above and save the file.

2) Create the service and deployment by running:

```bash
kubectl apply -f oneservice.yaml
```
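`kubectl apply` returns as soon as the objects are accepted, before the pods are actually running. A small sketch for waiting until the deployment's replicas are available, assuming the deployment name `aspnetvuejs`; the function name and the 120-second timeout are arbitrary choices. If the rollout stalls, `kubectl describe pod` usually shows why, for example an `ErrImagePull` when the image cannot be pulled from the private repository.

```bash
# Sketch: block until the deployment's pods are ready, then list them.
# wait_for_rollout is a hypothetical helper.
wait_for_rollout() {
  local name="${1:-aspnetvuejs}"
  # Exits non-zero if the rollout does not complete within the timeout.
  kubectl rollout status "deployment/$name" --timeout=120s || return 1
  # The pods carry the app label from the deployment's template.
  kubectl get pods -l "app=$name"
}
```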

The service and deployment are now created. To view the service, I typed the following:

```bash
kubectl get service
```

The public IP address of the service is displayed in the EXTERNAL-IP column of the result. It may show `<pending>` for a minute or two while Azure provisions the load balancer. The deployed web application can then be viewed by typing the public IP address into a browser.
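Rather than re-running `kubectl get service` until the address appears, you can poll for it. A minimal sketch, assuming the service name `aspnetvuejs`; the function name, the default of 30 attempts and the 10-second interval are hypothetical choices.

```bash
# Sketch: poll the service until Azure assigns a public IP, then print it.
# wait_for_external_ip is a hypothetical helper.
wait_for_external_ip() {
  local name="${1:-aspnetvuejs}" attempts="${2:-30}" i=0 ip
  while [ "$i" -lt "$attempts" ]; do
    # Empty until the load balancer has been provisioned.
    ip=$(kubectl get service "$name" -o jsonpath='{.status.loadBalancer.ingress[0].ip}')
    if [ -n "$ip" ]; then
      echo "$ip"
      return 0
    fi
    i=$((i + 1))
    sleep 10
  done
  echo "no external IP for service $name after $attempts attempts" >&2
  return 1
}
```

For example, `ip=$(wait_for_external_ip) && curl "http://$ip/"` fetches the application's home page once the address is available.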

Conclusion and next steps

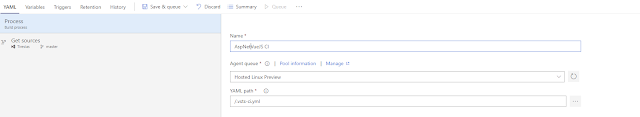

In this post, we created a Kubernetes service by declaring Kubernetes objects in a YAML file and applying them from Cloud Shell. This is useful for explaining the steps and understanding what is involved. In practice, however, we would want to do this as part of a well-defined deployment process. In my next post, I will explain how to deploy to a Kubernetes service using VSTS release management.